Reliable Battery Analytics Begins Before the Model

Advanced battery analytics depends not only on the sophistication of analytical models, but also on the quality and interpretability of the underlying telemetry. Even highly capable analytical models can produce unreliable outputs when incoming data is incomplete, inconsistent, noisy, or difficult to interpret operationally.

In real-world operating environments, data quality directly influences the reliability of KPIs, alarms, notifications, and operational recommendations. Without appropriate handling, poor-quality data can increase false alarms, obscure important signals, and reduce confidence in analytical insights.

This is why smart pre-processing and post-processing are essential to transforming raw telemetry into actionable battery insights.

1. From Raw Data to Actionable Insights

Turning raw telemetry into actionable insights requires multiple stages of preparation, validation, and interpretation throughout the analytics pipeline.

The data processing workflow behind PowerUp’s Battery Analytics solution is built around four key stages:

1. Raw telemetry: Time-series data collected in the field (current, voltage, temperature, SoC).

2. Pre-processed telemetry: A curated and validated time series where non-physical or inconsistent data has been identified and removed.

3. Analytical outputs: Core analytical results, including KPIs such as safety, performance and endurance metrics, and alarms.

4. Post-processed insights: Final KPIs, alarms, and notifications, refined to remove noise and highlight what truly matters.

In parallel, a dedicated configuration layer defines algorithm parameters, asset architecture, and system topology, ensuring that analytics remain fully contextualized to each customer’s fleet.

2. Pre-Processing: Preparing Telemetry for Reliable Analytics

Preparing telemetry for reliable analytics is not simply a matter of removing bad data points. The objective is to improve the consistency, interpretability, and usability of telemetry while preserving the meaningful signals required for downstream analytical models and operational insights.

Pre-processing is a carefully balanced compromise, grounded in strong domain expertise:

- Remove too much data, and you risk losing critical information about battery behavior;

- Keep everything, and you expose analytics to false alarms, misleading KPIs, and a loss of user trust.

This is where PowerUp’s intellectual property and experience in electrochemistry, data science, and data engineering come into play. Our pre-processing logic is designed to retain only physically meaningful data for the specific purpose of the target analytical models, while preserving both long-term trends and short-lived but important transient events.

3. Measuring Data Quality: Transparency by Design

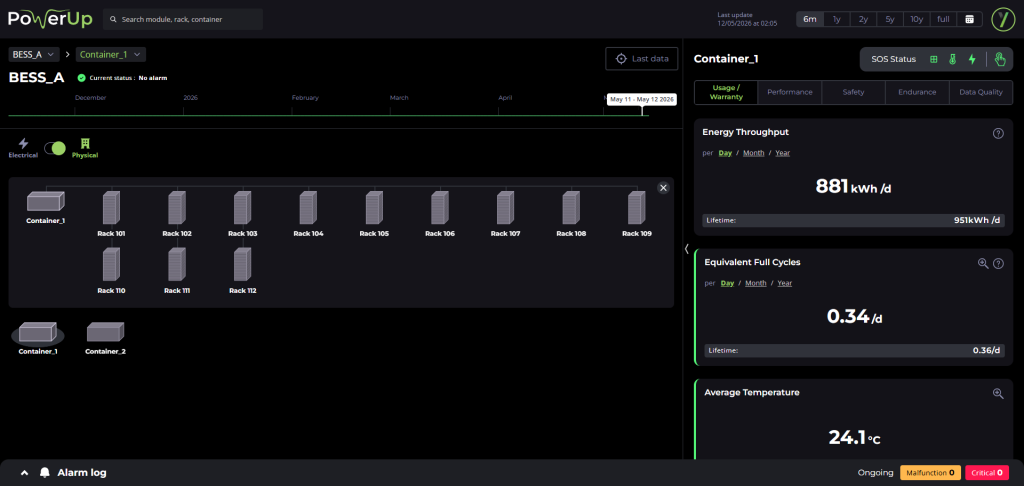

To make data quality both visible and actionable, PowerUp provides a dedicated Data Quality section within Battery Insight. Structured around three metrics, it helps users better understand telemetry reliability and interpret analytical outputs with the appropriate operational context.

3.1 Data Completeness — Did we receive the expected data?

This metric represents the percentage of expected telemetry points that were successfully collected and received. It helps quickly identify communication gaps, sensor outages, or data acquisition issues that may affect downstream analytics.
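As a minimal sketch of how such a percentage could be computed, the example below counts how many expected sampling slots in a time window contain at least one received point. The function name `data_completeness`, the fixed sampling period, and the slot-based counting are assumptions made for illustration, not PowerUp's actual implementation.

```python
from datetime import datetime, timedelta

def data_completeness(timestamps, start, end, sampling_period):
    """Percentage of expected sampling slots in [start, end) that received data."""
    expected = (end - start) // sampling_period  # number of expected points
    if expected <= 0:
        raise ValueError("window must span at least one sampling period")
    # Map each timestamp to its slot index; a set counts each slot at most once,
    # so duplicated points do not inflate the score.
    received_slots = {
        (ts - start) // sampling_period
        for ts in timestamps
        if start <= ts < end
    }
    return 100.0 * len(received_slots) / expected

# Usage: a 10-minute window sampled every minute, with minutes 5 and 6 missing
# and one duplicated point at minute 9.
start = datetime(2024, 1, 1)
end = start + timedelta(minutes=10)
received = [start + timedelta(minutes=m) for m in (0, 1, 2, 3, 4, 7, 8, 9, 9)]
print(data_completeness(received, start, end, timedelta(minutes=1)))  # 80.0
```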

3.2 Data Consistency — How much of the received data can actually be used?

Not all received telemetry can be safely used for analytics. Some data points must be removed because they are non-physical, inconsistent, or otherwise unreliable. Data consistency reflects the percentage of received telemetry that remains valid after pre-processing and can safely support downstream analytical models.
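The ratio described above can be sketched as follows. The example assumes, hypothetically, that pre-processing yields one flag per received row, with `None` meaning the row was kept; the function name and the flag representation are illustrative only.

```python
def data_consistency(flags):
    """Percentage of received rows that remain valid after pre-processing.

    flags holds one entry per received row: None if the row was kept,
    otherwise a label naming the reason it was removed.
    """
    if not flags:
        return None  # nothing received: consistency is undefined rather than 0
    kept = sum(1 for flag in flags if flag is None)
    return 100.0 * kept / len(flags)

# Usage: 20 received rows, 2 of them removed during pre-processing.
flags = [None] * 18 + ["voltage", "temperature"]
print(data_consistency(flags))  # 90.0
```

Because consistency is measured against received data rather than expected data, the two metrics compound: with 80 % completeness and 90 % consistency, only 72 % of the expected points would actually support downstream analytics.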

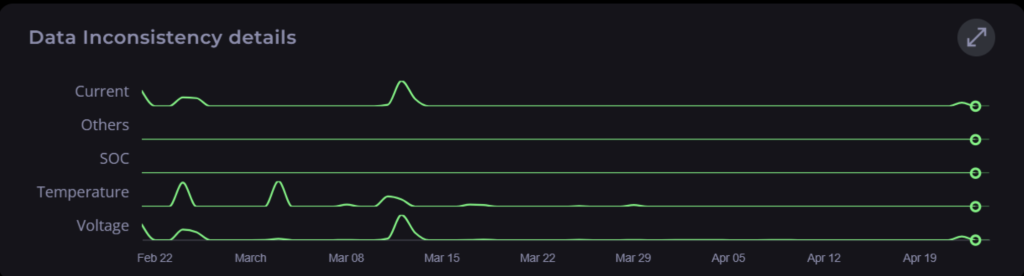

3.3 Data Inconsistency Details — Why was data removed?

During the pre-processing phase, each removed data row is flagged according to the physical metric responsible for the inconsistency, such as:

- Voltage

- Current

- Temperature

- State-of-Charge (SoC)

- Others (contextual signals)

These flags are aggregated daily and displayed as a timeline, providing a clear and intuitive view of telemetry data quality evolution over time.

The timeline consists of five parallel tracks, one per flag category (SoC, voltage, current, temperature, and others).

- Line height indicates the volume of inconsistent data for a given day:

  - Line close to the bottom: few inconsistencies

  - Line close to the top: many inconsistencies
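The daily aggregation behind such a timeline could look roughly like the sketch below. It assumes each removed row is available as a `(timestamp, metric)` pair; the track names in `METRICS` and the function name are hypothetical, chosen to mirror the five flag categories listed above.

```python
from collections import Counter, defaultdict
from datetime import datetime

# Assumed track names, mirroring the five flag categories.
METRICS = ("soc", "voltage", "current", "temperature", "others")

def daily_inconsistency_counts(removed_rows):
    """Count removed rows per day and per track.

    removed_rows: iterable of (timestamp, metric) pairs, one per removed row.
    Metrics not listed in METRICS are grouped under "others".
    """
    per_day = defaultdict(Counter)
    for ts, metric in removed_rows:
        track = metric if metric in METRICS else "others"
        per_day[ts.date()][track] += 1
    # Emit one value per track for each day, with explicit zeros.
    return {
        day: {m: counts[m] for m in METRICS}
        for day, counts in sorted(per_day.items())
    }

rows = [
    (datetime(2024, 1, 1, 8), "voltage"),
    (datetime(2024, 1, 1, 9), "voltage"),
    (datetime(2024, 1, 1, 23), "soc"),
    (datetime(2024, 1, 2, 1), "humidity"),  # unknown metric, counted as "others"
]
for day, counts in daily_inconsistency_counts(rows).items():
    print(day, counts)
```

Days on which no row was removed do not appear in the result; a renderer would need to fill them in as zeros to draw a continuous line.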

Interpreting the Timeline

The timeline is designed to help users quickly identify recurring telemetry quality issues, isolated anomalies, and long-term trends that may affect analytical reliability.

- A consistently non-zero line for a given metric may indicate a recurring issue (for example, a faulty sensor).

- Sudden spikes typically point to a specific event or anomaly.

- Minimal variation over time suggests stable and reliable data quality.

This visualization provides a fast and intuitive way to monitor telemetry consistency, detect patterns, and support informed decision-making without requiring deep data expertise.
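For readers who prefer to automate this reading, the three patterns can be approximated with a simple heuristic over one track's daily counts. This is only an illustrative sketch: the function name and both thresholds (`recurring_share`, `spike_factor`) are assumptions, not values used by Battery Insight.

```python
from statistics import median

def describe_track(daily_counts, recurring_share=0.8, spike_factor=5.0):
    """Label one timeline track as "spike", "recurring", or "stable".

    daily_counts: number of inconsistent rows per day for a single metric.
    """
    if not daily_counts:
        return "no data"
    baseline = median(daily_counts)
    # A spike is a day far above the typical level (floor of 1 avoids
    # treating any non-zero day as a spike when the baseline is zero).
    if max(daily_counts) > spike_factor * max(baseline, 1):
        return "spike"
    nonzero_share = sum(1 for c in daily_counts if c > 0) / len(daily_counts)
    if nonzero_share >= recurring_share:
        return "recurring"
    return "stable"

print(describe_track([0, 0, 0, 40, 0, 0, 0]))        # spike
print(describe_track([12, 10, 11, 13, 12, 9, 11]))   # recurring
print(describe_track([0, 1, 0, 0, 0, 0, 0]))         # stable
```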

4. Post-Processing: Refining Analytical Outputs for Reliable Insights

Even with perfectly pre-processed telemetry, analytics can still suffer from noise, transient artifacts, or context-specific edge cases. This is why post-processing is just as critical as pre-processing.

Post-processing applies expert-defined rules to:

- Filter out residual noise

- Aggregate signals in a meaningful and operationally relevant way

- Prevent false positives

- Preserve early warning signals

Once again, success lies in finding the right balance: removing what is irrelevant while keeping what is significant. Achieving this balance requires combined expertise in battery physics, data science, and data engineering.
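One common rule of this kind is a persistence filter, which confirms an alarm only once the raw condition has held for several consecutive samples. The sketch below illustrates the idea; the function name and the default of three samples are assumptions, and PowerUp's actual rules are not described in this article.

```python
def confirm_alarms(raw_flags, min_consecutive=3):
    """Confirm an alarm only after min_consecutive active samples in a row."""
    confirmed = []
    run = 0  # length of the current run of active samples
    for active in raw_flags:
        run = run + 1 if active else 0
        confirmed.append(run >= min_consecutive)
    return confirmed

# Usage: an isolated glitch at index 1 is suppressed, while the sustained
# condition starting at index 4 is confirmed from index 6 onwards.
raw = [False, True, False, False, True, True, True, True, False]
print(confirm_alarms(raw))
# [False, False, False, False, False, False, True, True, False]
```

The `min_consecutive` parameter captures the trade-off directly: a larger value suppresses more transient glitches but delays the confirmation of a genuine early warning.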

5. Addressing Real-World Data Challenges at Scale

Operational battery data is inherently messy:

- No two customers collect telemetry in exactly the same way

- No two data quality issues look alike

- Edge cases are the norm, not the exception

PowerUp’s approach is designed to scale across diverse operational environments while minimizing the need for costly and client-specific data pre-processing.

Our data pipeline includes:

- Formatting: standardizing telemetry data structures, units, identifiers, and naming conventions

- Filtering: removing non-physical values (out-of-range signals, flat lines, abnormal derivatives, etc.)

- Enhancement: resampling, phase detection, and intermediate computations supporting downstream analytical models
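To make the filtering step more concrete, the sketch below flags the three kinds of non-physical values named above for a single, evenly sampled signal. Everything specific here is an assumption for illustration: the function name, the flag labels, the exact-equality test for flat lines, and the thresholds, which in practice depend on the signal and the asset.

```python
def flag_non_physical(values, dt_s, lo, hi, max_rate, flat_run=5):
    """Return one flag per sample: None if kept, else the reason for removal.

    values: evenly sampled signal; dt_s: sampling period in seconds;
    lo/hi: physical range; max_rate: largest plausible change per second;
    flat_run: minimum run of identical values treated as a flat line.
    """
    flags = [None] * len(values)

    # 1. Out-of-range values.
    for i, v in enumerate(values):
        if not (lo <= v <= hi):
            flags[i] = "out_of_range"

    # 2. Abnormal derivative, checked only between two in-range neighbours.
    for i in range(1, len(values)):
        if flags[i] is None and flags[i - 1] is None:
            if abs(values[i] - values[i - 1]) / dt_s > max_rate:
                flags[i] = "abnormal_derivative"

    # 3. Flat lines: runs of at least flat_run identical values.
    start = 0
    for i in range(1, len(values) + 1):
        if i == len(values) or values[i] != values[start]:
            if i - start >= flat_run:
                for j in range(start, i):
                    if flags[j] is None:  # keep the earlier, more specific flag
                        flags[j] = "flat_line"
            start = i
    return flags

# Usage: hypothetical cell voltage in volts, sampled once per second.
voltage = [3.70, 3.71, 5.00, 3.72, 3.90, 3.90, 3.90, 3.90, 3.90, 3.91]
print(flag_non_physical(voltage, dt_s=1.0, lo=2.5, hi=4.3, max_rate=0.05))
```

A real pipeline would need signal-specific rules. A resting battery, for instance, can legitimately report a constant voltage, which is one reason such filtering calls for domain expertise rather than generic thresholds.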

6. Concrete Benefits

Providing transparency into data quality delivers tangible value:

- Customers can clearly distinguish between analytical platform limitations and underlying telemetry quality issues

- Data quality metrics help reveal broader weaknesses in telemetry data acquisition systems and processes

- Confidence in KPIs, alarms, and notifications is significantly strengthened

Conversely, without robust pre-processing and post-processing:

- False alarms become more frequent

- Important signals may be missed

- Users may misinterpret missing insights as a platform failure

In many cases, the poor telemetry quality that PowerUp observes is a symptom of broader monitoring and instrumentation issues across the customer’s ecosystem.

Conclusion

Deep battery analytics is not only about smarter algorithms and models; it also depends on intelligent telemetry handling throughout the analytics pipeline.

By combining transparent data quality metrics, expert-driven pre-processing, and intelligent post-processing, PowerUp ensures that analytics are built on solid foundations.

The result is clear: more reliable insights, fewer false positives, and greater confidence in every decision powered by battery data.